- Keygen akvis sketch 15-0 x64

- Uncharted nathan drake

- Adobe master collection cs4 download

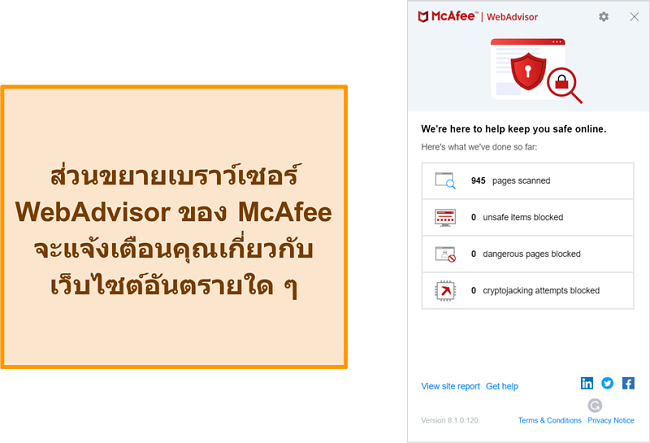

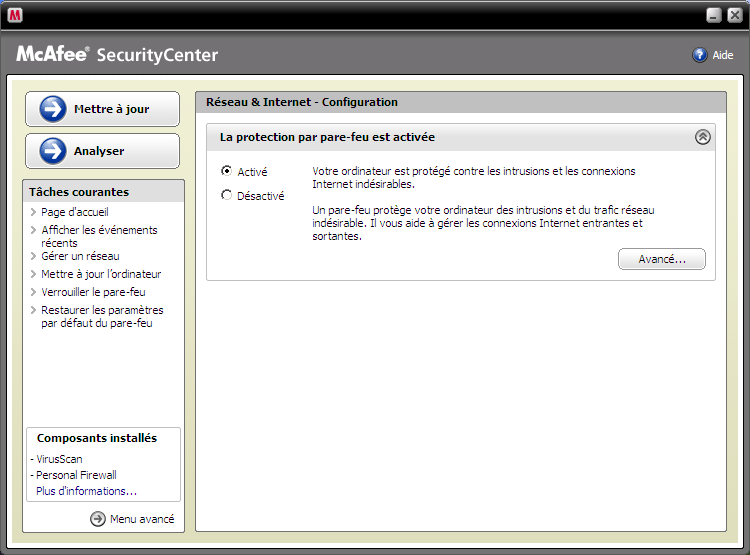

- Pare feu mac afee

- Max boost wifi booster

- Highest grossing movies inflation adjusted

- Fulbright tr into the dead 2

- 90210 season 5 episode 1 123movies

- Mac miller weekend download hiphopearly

- Skse mem patch

- How to open xln addictive drums in kontakt

- First class song actress

- Gba emulator windows

- Ramayana character

- Dot matrix printer papers please game

- Multi theft auto server virus

- Follower bearing arm for hesston 1014

- Latest telugu dengudu kathalu

- Can i run it deus ex mankind divided

- Jay sean ride it hindi lyrics

- Chopper builders handbook download

- Oracle 10g installation

- Win to flash serial key download

- Domain driven design bounded context

- Tovino thomas ennu ninte moideen

On Wed, at 7:53 PM, Kevin Ushey wrote:

> You will need to disable the firewall on all directories constructed within 'C:\Users\grbortz\AppData\Local\Temp\', if that is not happening. The R session directory is constructed using a unique directory name within that path, and R + RStudio will need to be able to read and […]

On disabling the McAfee firewall/antivirus completely, sparklyr works without problem! But at the enterprise level this is not sustainable. He can only disable the firewall for particular directories, not for the Temp directory as a whole, only for files or subdirectories within Temp (company rules).

We tried disabling the firewall for only the following directories:

"C:\Users\grbortz\AppData\Local\rstudio\spark\Cache\spark-2.0.2-bin-hadoop2.7"

"C:\Users\grbortz\AppData\Local\rstudio\spark\Cache\spark-2.0.2-bin-hadoop2.7\tmp\hadoop"

The errors reported:

Error in file(con, "r") : cannot open the connection

cannot open file 'C:\Users\grbortz\AppData\Local\Temp\RtmpyQvjkq\file9f06da6313_spark.log':

Failed while connecting to sparklyr to port (8880) for sessionid (5627): Gateway in port (8880) did not respond.

Path: C:\Users\grbortz\AppData\Local\rstudio\spark\Cache\spark-2.0.2-bin-hadoop2.7\bin\spark-submit2.cmd

Please inform me of the full list of directories and files on which I need to disable the McAfee antivirus/firewall to get this to work. WE CANNOT DISABLE MCAFEE/FIREWALL OVER THE WHOLE PC. THE COMPANY WON'T ALLOW THIS! It works when we do this.

He states that even if it did work, the company would not allow this, as this directory is prone to virus attack. […] therefore have to prename the temp subdirectories that need to be unlocked and the files that Spark creates. […] same, or are they created on each new Spark session so they won't be seen by McAfee on startup? Is this not going to be a problem for everybody that is subject to corporate rules, and normally at enterprise level this would be the case? By scaling R using Spark, is it not the intention to use R at […] Do you not see this as a problem for the deployment […]

We also notice that the ports are different each session, and the McAfee controller also has to free up ports, but if they are dynamic and unique for each session this is impossible.

[…] same, but the second is changing for each execution! The strange thing is that McAfee picked up all the directories mentioned and that these directories are blocked, i.e. the Hadoop/Spark directories and the Temp directory. However, it is still giving a connection problem, and we will be getting support from McAfee to see why the firewall is still blocking. The local McAfee expert asked if perhaps there is an exe file that should be run by RStudio when the connection is set up but is being blocked from execution, and where this executable may lie.

We know it's the McAfee firewall, as I am told that McAfee disables the […] This does not occur with Defender, apparently, as that still uses the Windows firewall, which can be controlled. As soon as we disable it entirely, the code on the Tutorial home page runs perfectly. This is most unfortunate, as it may end my attempt to use sparklyr. Is there no way RStudio can produce a workaround […] subject to corporate rules (controlled by a central network administrator, where the user does not have FULL rights, and where McAfee is used) […]
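The error quoted in this thread says the gateway on port 8880 did not respond. One rough way to see whether anything is accepting TCP connections on that port while a session is starting is a small probe like the sketch below. It is only an illustration in Python using the standard library: `port_is_open` is a hypothetical helper name, and probing `127.0.0.1` assumes the local-mode setup described in the thread. A refused connection cannot by itself distinguish a firewall block from a gateway process that never started.

```python
import socket


def port_is_open(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within `timeout` seconds."""
    try:
        # create_connection raises OSError (refused, timed out, unreachable) on failure.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


if __name__ == "__main__":
    # 8880 is the gateway port named in the error message in this thread.
    print("gateway port reachable:", port_is_open("127.0.0.1", 8880))
```

Running the probe once with the firewall rules in place and once with them relaxed (where company policy permits) may help narrow down whether the port itself is being blocked.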